Multi-Resolution Sparse Radiance Fields

SPARF is a large dataset of Sparse Radiance Fields (SRFs) at multiple voxel resolutions: 32³, 128³, and 512³.

Panels: 32³ voxels, 128³ voxels, 512³ voxels

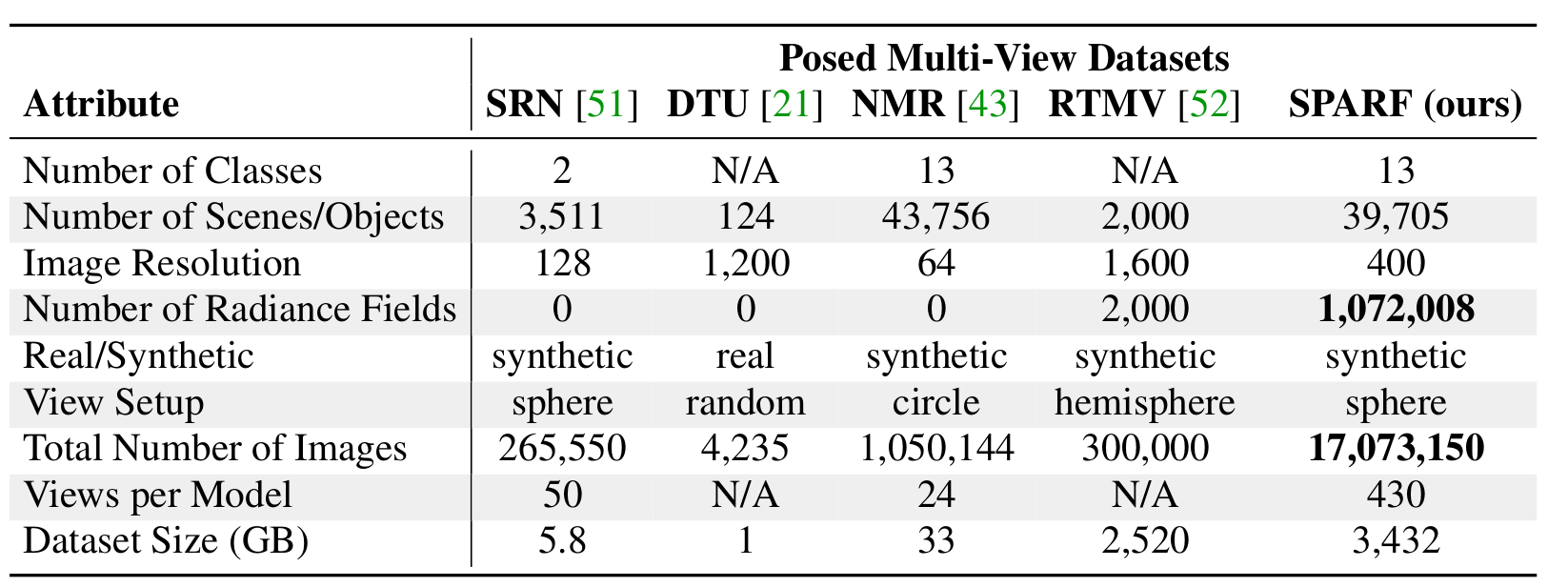
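
To make the idea of a sparse, multi-resolution field concrete, here is a minimal sketch of a sparse voxel container. It is an illustration only, not the dataset's storage format: the `SparseRadianceField` class, the `downsample` helper, the use of plain RGB per voxel, and the average-pooling step are all assumptions introduced for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

Coord = Tuple[int, int, int]
Color = Tuple[float, float, float]


@dataclass
class SparseRadianceField:
    """Hypothetical container: only occupied voxels are stored."""
    resolution: int
    # voxel index -> (density, rgb); plain RGB is a simplification
    voxels: Dict[Coord, Tuple[float, Color]] = field(default_factory=dict)

    def set(self, coord: Coord, density: float, rgb: Color) -> None:
        if not all(0 <= c < self.resolution for c in coord):
            raise IndexError(f"{coord} is outside a {self.resolution}^3 grid")
        self.voxels[coord] = (density, rgb)

    def occupancy(self) -> float:
        """Fraction of the dense grid that is actually stored."""
        return len(self.voxels) / self.resolution ** 3


def downsample(srf: SparseRadianceField, factor: int) -> SparseRadianceField:
    """Average occupied voxels into a grid that is `factor` times coarser."""
    if factor < 1 or srf.resolution % factor:
        raise ValueError("factor must be a positive divisor of the resolution")
    sums: Dict[Coord, list] = {}
    for (x, y, z), (density, (r, g, b)) in srf.voxels.items():
        key = (x // factor, y // factor, z // factor)
        acc = sums.setdefault(key, [0.0, 0.0, 0.0, 0.0, 0])
        acc[0] += density
        acc[1] += r
        acc[2] += g
        acc[3] += b
        acc[4] += 1
    coarse = SparseRadianceField(srf.resolution // factor)
    for key, (d, r, g, b, n) in sums.items():
        coarse.voxels[key] = (d / n, (r / n, g / n, b / n))
    return coarse
```

A dense grid would hold 32,768 voxels at 32³, 2,097,152 at 128³, and 134,217,728 at 512³, which is why storing only the occupied voxels matters at the higher resolutions.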

SPARF: Posed Multi-View Dataset

SPARF is a large, high-resolution posed multi-view dataset compared to other shape datasets.

SuRFNet: Learning to Generate Sparse Radiance Fields

The SuRFNet pipeline learns a sparse convolutional network conditioned on partial SRFs. It combines losses on the generated SRFs with a perceptual loss on the images rendered from the output SRF.
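
One plausible way to write such a combined objective is shown below. The symbols are assumptions made for illustration and are not taken from the paper: $\hat{S}$ is the generated SRF, $S$ the reference SRF, $R(\cdot)$ a differentiable renderer, $I$ the reference images, and $\lambda$ a weighting factor.

```latex
\mathcal{L}_{\text{total}}
  = \mathcal{L}_{\text{SRF}}\bigl(\hat{S}, S\bigr)
  + \lambda \, \mathcal{L}_{\text{perc}}\bigl(R(\hat{S}), I\bigr)
```

The first term compares the fields directly in 3D, while the second compares rendered images in a perceptual feature space. The exact form of each term and the value of $\lambda$ are left unspecified here.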

Mesh Extraction from SRFs

Since Sparse Radiance Fields (SRFs) are explicit 3D structures, obtaining high-quality 3D meshes is straightforward.
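
As a rough illustration of why an explicit voxel structure makes surface extraction easy, the sketch below thresholds per-voxel densities and collects every voxel face that borders empty space. This is a naive, blocky stand-in and not the extraction method used for SPARF; an iso-surface method such as marching cubes would give smoother meshes. The function names and the density threshold are hypothetical.

```python
from typing import Dict, List, Set, Tuple

Coord = Tuple[int, int, int]

# The six axis-aligned neighbour offsets, which double as outward face normals.
NEIGHBORS: List[Coord] = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def occupied_from_density(densities: Dict[Coord, float], threshold: float) -> Set[Coord]:
    """Keep voxels whose density exceeds the (assumed) threshold."""
    return {coord for coord, density in densities.items() if density > threshold}


def boundary_faces(occupied: Set[Coord]) -> List[Tuple[Coord, Coord]]:
    """Return (voxel, outward_normal) for every face that borders empty space."""
    faces = []
    for voxel in sorted(occupied):
        for offset in NEIGHBORS:
            neighbor = (
                voxel[0] + offset[0],
                voxel[1] + offset[1],
                voxel[2] + offset[2],
            )
            if neighbor not in occupied:
                faces.append((voxel, offset))
    return faces
```

Each returned face can be turned into a quad (or two triangles) on the corresponding side of its voxel; faces shared by two occupied voxels are skipped, so only the outer surface remains.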

Novel View Synthesis from a Single View

SuRFNet naturally enables novel view synthesis from a single view by conditioning the output SRF on the partial SRFs built from the few input views. This pipeline achieves state-of-the-art results on novel view synthesis for unseen shapes compared to recent baselines (PixelNeRF and VisionNeRF).
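
Once an SRF is available, a new view is obtained by rendering it from the desired camera. The sketch below shows the standard emission-absorption compositing along a single ray, as commonly used by NeRF-style renderers. Whether SuRFNet's renderer matches this exactly is an assumption, and the `composite_ray` function and its inputs are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Color = Tuple[float, float, float]


def composite_ray(samples: Sequence[Tuple[float, Color]], step: float) -> Tuple[Color, float]:
    """Alpha-composite (density, rgb) samples ordered front to back.

    Returns the accumulated colour and the remaining transmittance.
    """
    transmittance = 1.0
    color: List[float] = [0.0, 0.0, 0.0]
    for density, rgb in samples:
        # Opacity of this segment under the emission-absorption model.
        alpha = 1.0 - math.exp(-density * step)
        weight = transmittance * alpha
        for i in range(3):
            color[i] += weight * rgb[i]
        # Light that survives this segment continues to the next sample.
        transmittance *= 1.0 - alpha
    return (color[0], color[1], color[2]), transmittance
```

In a sparse field, the samples along a ray would come only from the occupied voxels it crosses, so empty space contributes nothing and can be skipped.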

Related Links

For more work on 3D radiance field generation, please also check out:

DreamFusion: Text-to-3D using 2D Diffusion performs text-guided NeRF generation with 2D diffusion. It proposes Score Distillation Sampling to optimize samples via diffusion, which could potentially also be applied to modalities other than text.

LION: Latent Point Diffusion Models for 3D Shape Generation introduces a hierarchical approach to learning high-quality point cloud synthesis that can be augmented with modern surface reconstruction techniques to generate smooth 3D meshes.

DiffRF: Rendering-guided 3D Radiance Field Diffusion is a denoising diffusion probabilistic model that operates directly on 3D radiance fields and is trained with an additional volumetric rendering loss. This enables learning strong radiance priors with high rendering quality and accurate geometry.

BibTeX

@InProceedings{Hamdi_2023_ICCV,
    author    = {Hamdi, Abdullah and Ghanem, Bernard and Nie{\ss}ner, Matthias},
    title     = {SPARF: Large-Scale Learning of 3D Sparse Radiance Fields from Few Input Images},
    booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV) Workshops},
    month     = {October},
    year      = {2023},
    pages     = {2930-2940}
}